Once you have run MDR you are ready to take a look at the results. I usually start with the Summary Table in the Analysis tab. There are four columns in the table. The first column gives the best model for each order examined. With the default settings there should be four rows, corresponding to the best 1-, 2-, 3-, and 4-locus models. The Training Accuracy is reported in the second column. This is the average accuracy across the n cross-validation (CV) intervals, and it is what is used to choose each of the best models in column one. You can see the second best model, for example, in the Landscape tab if you have that option turned on.

The best model for each CV interval is then applied to the testing portion of the data to derive the Testing Accuracy (TA) in column three. As with the Training Accuracy, the estimates are averaged across all CV intervals. The TA is what I use to pick a best model. Ideally, you want to see the TA go up as the order of the model increases and then start going down at some point. The model with the highest TA is what I consider the 'best' overall model. According to the CV analysis, it is the model most likely to generalize to independent data. The TA starts going down again in higher-order models because at some point false positives are added to the model, which decreases its predictive ability. Overfitting can be seen in the Training Accuracy, which continues to rise as additional attributes are added to the model.
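To make the selection rule concrete, here is a minimal Python sketch that picks the model with the highest TA from a Summary Table. The rows, the SNP names, and the `pick_best_model` helper are all hypothetical and are not part of the MDR software. The numbers are invented to show the typical pattern of Training Accuracy rising while TA peaks and then falls.

```python
# Hypothetical Summary Table rows: (model, training accuracy, testing accuracy, CVC).
# All values are invented for illustration; they are not MDR output.
summary = [
    ("SNP1", 0.58, 0.56, 8),
    ("SNP1,SNP2", 0.66, 0.63, 10),
    ("SNP1,SNP2,SNP3", 0.72, 0.60, 6),
    ("SNP1,SNP2,SNP3,SNP4", 0.79, 0.57, 4),
]


def pick_best_model(rows):
    """Return the row with the highest testing accuracy (TA).

    Exact ties on TA are broken by the higher CVC. This is a simplification
    of using the CVC to choose between models with similar TAs.
    """
    return max(rows, key=lambda row: (row[2], row[3]))


best = pick_best_model(summary)
print("Best overall model:", best[0])
```

Note that the 4-locus model has the highest Training Accuracy in this toy table but would not be chosen, because the choice is made on TA.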

As a rule of thumb I like to see a TA of at least 0.55 for a model to be considered interesting. Anything over 0.60 is almost always statistically significant. I rarely see a TA over 0.70 in real data. If you get a TA close to 1.0 you might check the data. This is highly unusual unless you are studying a mutation with a huge effect size. Remember, a TA of 0.50 is what you would expect if you were to predict case-control status by flipping a coin.

Note that accuracy is the proportion of instances or subjects that are correctly classified as being a case or a control, for example. A more formal definition is (TP + TN)/(TP + TN + FP + FN) where TP are true positives, TN are true negatives, FP are false positives and FN are false negatives. Accuracy works great as long as the numbers of cases and controls are balanced or equal. We now use balanced accuracy, which we have shown is better for datasets that have one class (e.g. controls) that is bigger than the other. Here balanced accuracy is defined as (sensitivity + specificity)/2 where sensitivity = TP/(TP+FN) and specificity = TN/(FP+TN). This gives an accuracy estimate that is not biased by the larger class. We combine this with a threshold for defining high-risk and low-risk genotype combinations that is equal to the ratio of cases to controls in the dataset. Note that accuracy and balanced accuracy are the same when the dataset is balanced. We have a 2007 paper in Genetic Epidemiology that shows this approach has good power in imbalanced datasets.
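The two definitions are easy to compare in code. The following Python sketch implements the formulas exactly as written above. The function names and the example counts are made up for illustration.

```python
def accuracy(tp, tn, fp, fn):
    """Plain accuracy: (TP + TN) / (TP + TN + FP + FN)."""
    return (tp + tn) / (tp + tn + fp + fn)


def balanced_accuracy(tp, tn, fp, fn):
    """Balanced accuracy: (sensitivity + specificity) / 2."""
    sensitivity = tp / (tp + fn)  # proportion of cases called correctly
    specificity = tn / (fp + tn)  # proportion of controls called correctly
    return (sensitivity + specificity) / 2


# Imbalanced toy example: 100 cases, 900 controls, everyone called a control.
print(accuracy(tp=0, tn=900, fp=0, fn=100))
print(balanced_accuracy(tp=0, tn=900, fp=0, fn=100))

# Balanced toy example: 50 cases, 50 controls.
print(accuracy(tp=40, tn=30, fp=20, fn=10))
print(balanced_accuracy(tp=40, tn=30, fp=20, fn=10))
```

In the imbalanced example a classifier that calls everyone a control gets a plain accuracy of 0.9 but a balanced accuracy of 0.5, which is the coin-flip value. In the balanced example the two measures agree, up to floating-point rounding.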

The final column of the Summary Table lists the CV Consistency or CVC. This is a statistic that we developed to record the number of times MDR found the same model as it divided up the data into different segments. A true signal should be seen regardless of how you divide the data. Good models usually have a CVC of around 9 or 10 assuming 10-fold CV. The CVC is useful in two ways. First, it can be used to help decide which is the best model if two or more models have similar TAs. Second, a CVC of less than 10 indicates that the TA is biased because at least one of the CV intervals will have a TA estimated from a different model. In this case I run a forced analysis (see Part 3) with the best attributes to get an unbiased estimate of the TA. I do this with any model that has a CVC less than 10 and report the unbiased estimates.
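As a rough sketch of the idea, the CVC can be thought of as a count of how often the same model wins across CV intervals. The Python below assumes you have the best model from each interval (for example, read off the CV Results tab). The `cv_consistency` helper and the SNP names are hypothetical, and the code simply reports the most frequently chosen model, which may not match how the software does it internally.

```python
from collections import Counter


def cv_consistency(best_models_per_interval):
    """Return (most frequent model, number of CV intervals that chose it).

    Each model is a collection of attribute names. Order within a model is
    ignored, so ("SNP2", "SNP1") counts as the same model as ("SNP1", "SNP2").
    """
    counts = Counter(frozenset(model) for model in best_models_per_interval)
    model, cvc = counts.most_common(1)[0]
    return sorted(model), cvc


# Hypothetical 10-fold CV: nine intervals agree, one picks a different model.
intervals = [("SNP1", "SNP2")] * 9 + [("SNP1", "SNP3")]
model, cvc = cv_consistency(intervals)
print(model, cvc)
```

Here the CVC is 9 out of 10, so one interval contributed a TA from a different model. This is the situation where a forced analysis gives the unbiased estimate.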

We highly recommend permutation testing to assess the statistical significance of MDR models. First, you can try a general permutation test that randomizes the case-control labels to derive an empirical distribution of the TA under the null hypothesis of no association. This will tell you whether the model is significant but will not directly provide evidence for interaction. We have a 2010 paper in the Pacific Symposium on Biocomputing that discusses how to use permutation testing to specifically test the null hypothesis of linearity. A significant p-value here reflects interaction that is independent of any marginal effects. We have a 2009 paper in Genetic Epidemiology that discusses how to use an extreme value distribution (EVD) to reduce the total number of permutations that you need to run. The MDR permutation testing module (MDRpt) is available for download.
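The general label-shuffling test can be sketched in a few lines of Python. This is not MDRpt and does not implement the linearity test or the EVD approach. It only illustrates the basic idea, under some stated assumptions: the statistic here is a toy balanced accuracy for a single binary attribute rather than a full MDR run with CV, the function names are hypothetical, and the p-value uses the common (count + 1)/(permutations + 1) estimator.

```python
import random


def balanced_accuracy_of_rule(attribute, labels):
    """Toy statistic: balanced accuracy of 'predict case when attribute == 1'.

    Labels are 1 for cases and 0 for controls. A real analysis would rerun
    the whole MDR procedure here and return its testing accuracy.
    """
    tp = sum(1 for a, y in zip(attribute, labels) if a == 1 and y == 1)
    tn = sum(1 for a, y in zip(attribute, labels) if a == 0 and y == 0)
    n_cases = sum(labels)
    n_controls = len(labels) - n_cases
    return (tp / n_cases + tn / n_controls) / 2


def permutation_p_value(statistic, attribute, labels, n_perm=1000, seed=0):
    """Empirical p-value from shuffling the case-control labels."""
    rng = random.Random(seed)
    observed = statistic(attribute, labels)
    shuffled = list(labels)
    as_extreme = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)  # break any attribute-label association
        if statistic(attribute, shuffled) >= observed:
            as_extreme += 1
    # Add one to numerator and denominator so the p-value is never zero.
    return observed, (as_extreme + 1) / (n_perm + 1)


# Invented data: 50 cases and 50 controls with a strong attribute effect.
attribute = [1] * 40 + [0] * 10 + [1] * 10 + [0] * 40
labels = [1] * 50 + [0] * 50
observed, p = permutation_p_value(balanced_accuracy_of_rule, attribute, labels)
print(observed, p)
```

The smallest p-value this estimator can return is 1/(n_perm + 1), which is one reason the number of permutations matters.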

There are up to six tabs that will be visible at the bottom of the Analysis tab that can be used to further assess and interpret your results. Some of these will be mentioned in this lesson. Interpretation will be covered later.

The Graphical Model tab shows the distribution of cases (left bars) and controls (right bars) for each genotype or level combination. The high-risk cells are shaded dark grey while the low-risk cells are shaded light grey. The distribution shown corresponds to the model selected in the Summary Table. This figure can be saved to a file for use in a presentation or manuscript.

Note that MDR combines the high-risk and low-risk combinations into a new single attribute using constructive induction. It is the new single attribute that is statistically investigated. You can view the distribution of cases and controls for this single MDR attribute by going to the Attribute Construction tab at the top of the software. Once there, select the SNPs in your best model by holding down the Control key and left-clicking with your mouse. Once the right SNPs are selected, push the Construct button. This will add the new single MDR attribute to your dataset. Now do a forced analysis with that single constructed attribute and you will be able to see the statistics for the analysis of that variable along with the graphical model. In our newer papers we are putting the graphical model for the constructed attribute next to the graphical model given in the default output to show the MDR attribute construction process. This is helpful for readers to see what MDR is really doing. We will talk later about how you can include this as part of your analysis process.
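For readers who want to see the pooling step spelled out, here is a minimal Python sketch of constructive induction for one set of SNPs. It is an illustration and not the MDR source code. The function name and the data are invented, and the handling of ties (a cell whose case:control ratio exactly equals the threshold is called low-risk) is an assumption.

```python
from collections import defaultdict


def construct_mdr_attribute(genotypes, labels):
    """Pool multilocus genotype combinations into one binary attribute.

    genotypes: one tuple of genotype codes per subject, e.g. (0, 1).
    labels:    1 for a case, 0 for a control.
    Returns (new attribute per subject, set of high-risk combinations),
    where 1 means high-risk and 0 means low-risk.
    """
    n_cases = sum(labels)
    n_controls = len(labels) - n_cases
    counts = defaultdict(lambda: [0, 0])  # combination -> [cases, controls]
    for combo, y in zip(genotypes, labels):
        counts[combo][0 if y == 1 else 1] += 1
    # High-risk when cases/controls in the cell exceeds the overall ratio of
    # cases to controls. Cross-multiplying avoids dividing by an empty count.
    high_risk = {
        combo
        for combo, (cases, controls) in counts.items()
        if cases * n_controls > controls * n_cases
    }
    return [1 if combo in high_risk else 0 for combo in genotypes], high_risk


# Invented two-SNP data with an XOR-like pattern: 10 cases, then 10 controls.
genotypes = (
    [(0, 1)] * 4 + [(1, 0)] * 4 + [(0, 0)] + [(1, 1)]
    + [(0, 0)] * 4 + [(1, 1)] * 4 + [(0, 1)] + [(1, 0)]
)
labels = [1] * 10 + [0] * 10
new_attribute, high_risk = construct_mdr_attribute(genotypes, labels)
print(sorted(high_risk))
```

In this toy dataset neither SNP is informative on its own, but the constructed attribute separates most cases from most controls.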

The Best Model tab gives some additional statistics for the best model selected in the Summary Table. Once you have selected a best model I think it is acceptable to report the stats on the whole dataset. At this point you are not concerned about overfitting as long as you are picking your best model using the TA as discussed above. Note that the odds ratio is estimated by using the low-risk group as the reference. Also note that the chi-square p-value is probably not accurate since I haven't taken the time to calculate what the actual degrees of freedom should be for an MDR attribute. It is probably greater than one but less than that required to estimate the full effect of the SNPs. Thus, I usually don't report the chi-square values.
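As a small worked example of using the low-risk group as the reference, the odds ratio for the constructed high-risk/low-risk attribute can be computed from a 2x2 table. The function name and the counts below are hypothetical, and the sketch does not handle empty cells, which would need a correction of some kind.

```python
def odds_ratio_low_risk_reference(cases_high, controls_high, cases_low, controls_low):
    """Odds of being a case in the high-risk group relative to the low-risk group."""
    odds_high = cases_high / controls_high
    odds_low = cases_low / controls_low  # reference group
    return odds_high / odds_low


# Invented counts: 8 cases and 2 controls are high-risk, 2 cases and 8 controls are low-risk.
print(odds_ratio_low_risk_reference(8, 2, 2, 8))
```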

The IF-THEN rules are a different way of looking at the MDR model. These are the rules that are used to pool high-risk and low-risk genotypes. I have not seen anyone report these.

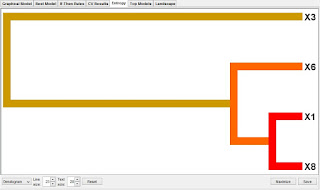

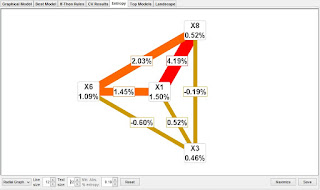

The CV Results tab gives the statistics and the best model identified in each CV interval. Sometimes it is interesting to see what MDR is picking when CVC is less than 10. We will discuss the Entropy tab in more detail in a later post. This is used to statistically interpret your MDR models. The dendrogram, for example, uses information theory and cluster analysis to identify synergistic, independent, and redundant genetic effects. This has become a critical part of an MDR analysis since it provides for the first time real evidence for synergistic interactions or epistasis in an MDR model. Please try to include this in your papers. Otherwise, you don't really know if what you are reporting is really epistasis. This information along with the explicit test of epistasis method described above will convince your readers that you are actually detecting non-additive effects.

If you checked the Compute Fitness Landscape option in the Configuration tab you should see a Landscape tab. This is where you can explore all the models evaluated by MDR. This is very helpful for seeing what the second, third, and even fourth best models are. Try the zoom feature by left-clicking and holding the button down while dragging the mouse pointer across the plot to select an area to zoom to. If you zoom in far enough you should find black dots. Mouse over the black dots to see what the specific combination is. Once you find an interesting second best model you can then run a forced analysis to see how its TA compares to that of your best model. Sometimes it is a better predictor. We have done this in several recent papers. I think it is fine to do this without too much concern about overfitting as long as you limit your forced analysis to just a few second or third best models. Also note that there is a Track Top Models option in the Configuration tab that sets how many of the best models are shown in the Top Models tab of the Analysis tab. This is handy because MDR then doesn't need to compute the whole fitness landscape.

This post was last updated on January 20, 2013.